GNUChess and Jester both use an index array to generate their moves. They initialize the engine by filling an array with possible moves: they take the six pieces and compute the legal moves that are available from each square. I took this idea and compressed it to two indexes and a table. The table holds a value that tells whether the 16 moves are possible, one index holds the offset of the move, and the other holds the mask. Generating a sliding move is then as easy as looking up the validity of its mask in its allowable offsets against the move table.

Or were the improvements mostly due to the same algorithms running faster? If the former, are these algorithmic improvements public? And if so, what were the improvements? Where can I read about them?

Minor nit: if the algorithms got better, then that is the software getting better, so there is no "or".

Well, it wasn't the former, it was the latter. The popular version of Moore's Law tells us that processor speed will double roughly every 18 months. That means it has doubled roughly 13 times in 20 years (20 / 1.5 ≈ 13), which makes modern processors somewhere in the region of 8,000 times faster (2^13 = 8,192). So, far and away the biggest improvement in engine performance is due to faster hardware. Nevertheless, the improvements are mostly open source and freely visible by downloading the sources for engines like Stockfish.

Perhaps also worth giving the general Stockfish download link, since the specific source code link will likely expire when version 9 comes out.

The algorithms got better, and today's best engines running on 1995 hardware (remember, Deep Blue was 1997) will handily beat Kasparov. As I understand it, there are two aspects of algorithms. If, for example, I take your queen with my queen, a human opponent will automatically look first at recapturing. A computer, however, will evaluate every possible response to QxQ. Almost all of the time, this is wasted processing power. A good search algorithm cuts down all these "branches", since they're irrelevant anyway. The standard search algorithm is alpha-beta pruning, and it was used in the earliest chess computers.
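To make the branch-cutting idea behind alpha-beta pruning concrete, here is a minimal sketch in Python. It is not taken from any engine: the toy tree, the function names, and the leaf counters are all assumptions made for illustration. Leaves are plain integers standing in for evaluation scores, and the counters show how many leaves each search actually looks at.

```python
import math

# Hypothetical toy game tree: inner lists are positions, integers are leaf scores.
TREE = [[3, 12, 8], [2, 4, 6], [14, 5, 2]]


def minimax(node, maximizing, counter):
    """Plain min-max: visits every leaf."""
    if isinstance(node, int):
        counter[0] += 1
        return node
    values = [minimax(child, not maximizing, counter) for child in node]
    return max(values) if maximizing else min(values)


def alphabeta(node, maximizing, alpha, beta, counter):
    """Min-max with alpha-beta cut-offs: skips branches that cannot matter."""
    if isinstance(node, int):
        counter[0] += 1
        return node
    if maximizing:
        best = -math.inf
        for child in node:
            best = max(best, alphabeta(child, False, alpha, beta, counter))
            alpha = max(alpha, best)
            if alpha >= beta:  # the opponent already has a better option elsewhere
                break
        return best
    best = math.inf
    for child in node:
        best = min(best, alphabeta(child, True, alpha, beta, counter))
        beta = min(beta, best)
        if alpha >= beta:  # the side to move already has a better option elsewhere
            break
    return best


if __name__ == "__main__":
    full, pruned = [0], [0]
    print(minimax(TREE, True, full), full[0])                            # 3 9
    print(alphabeta(TREE, True, -math.inf, math.inf, pruned), pruned[0])  # 3 7
```

Both searches return the same score, but the pruned search skips two leaves of the second branch as soon as it sees that branch is already worse than the first. On a tree this small the saving is tiny; real engines also depend heavily on move ordering, which this sketch does not attempt.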

The linked site is a great source, but it's hard to navigate.

There are 3 main functions that are tweaked to improve a chess engine: the evaluation, move generation, and search functions.

Evaluation is the hardest to program, as there are many exceptions to the rules. With hard drive space getting cheaper, the eval function allows for more exceptions to be evaluated.

Move generation, along with making and unmaking a move, consumes a lot of processing time because it has to be performed so many times. The most common generation functions are mailbox, bitboard, 0x88, 8x8, extended boards (10x10, 10x12), and a predetermined move array/table (I use an indexed move table). Current opinion is that bitboards are the fastest, and that using magic bitboards speeds this up by up to 30%. Robert Hyatt, professor and creator of the Crafty chess engine, claims no significant speed increase.

The early search function was the primitive min-max function, basically where you try to maximize the score of the side to move and minimize the opponent's score. Engines then reduced the number of moves being searched with transposition tables, cut-off values, aspiration windows, and history heuristics. There is also internal iterative deepening, which tries to search the "best" move(s) the deepest, hoping that searching the other moves will prove to be fruitless.
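As a rough illustration of the predetermined move array/table mentioned above, the following Python sketch fills a table once at start-up and then generates moves by lookup. It is an assumption-laden simplification: it handles only knights, uses a plain 8x8 square numbering (0 = a1, 63 = h8), and the names `KNIGHT_OFFSETS`, `build_knight_table`, and `KNIGHT_MOVES` are invented here. It is not the compressed two-index scheme described in the answer, nor the actual GNUChess or Jester code.

```python
# Hypothetical sketch: precompute knight targets for every square at start-up.
# (rank delta, file delta) for the eight knight jumps.
KNIGHT_OFFSETS = [(1, 2), (2, 1), (2, -1), (1, -2),
                  (-1, -2), (-2, -1), (-2, 1), (-1, 2)]


def build_knight_table():
    """Return a list of 64 lists; entry sq holds the squares a knight on sq can reach."""
    table = []
    for sq in range(64):
        rank, file = divmod(sq, 8)
        targets = []
        for d_rank, d_file in KNIGHT_OFFSETS:
            r, f = rank + d_rank, file + d_file
            if 0 <= r < 8 and 0 <= f < 8:  # keep only on-board squares
                targets.append(r * 8 + f)
        table.append(targets)
    return table


# Filled once when the engine initializes; move generation is then a lookup.
KNIGHT_MOVES = build_knight_table()

if __name__ == "__main__":
    print(sorted(KNIGHT_MOVES[0]))  # knight on a1 -> [10, 17], i.e. c2 and b3
```

The table only encodes board geometry. A real generator would still have to filter out squares occupied by the mover's own pieces and moves that leave the king in check, and sliding pieces need extra information about blockers.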

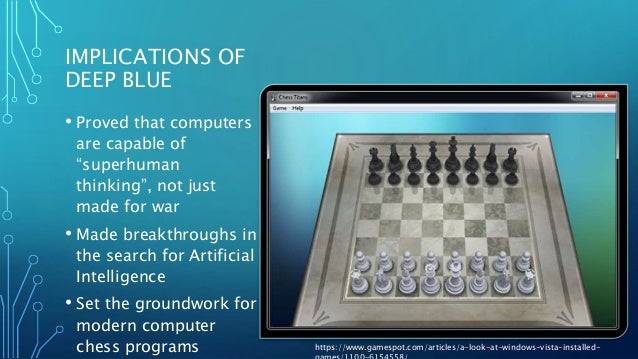

Maybe you can take a look at TalkChess, a forum dedicated to computer chess. I found a recent thread that might be interesting for you: "Progress in 30 years by four intervals of 7-8 years". A couple of matches between (former) top engines were played on the same hardware. The test suggests that in recent years (2002-2017), the gain was mainly made by software improvements. In the test, Stockfish (2017) scored an impressive 94/100 against RobboLito (2009), while RobboLito, in turn, crushed Shredder (2002) with 92/100.

An important remark: as parallel computing is not implemented in the older engines, the test was performed on a single core. As a result, the hardware gain from parallel machines is not measured. On the other hand, you could argue that parallel computing is also a software gain: it is not easy to design and implement an efficient and well-scaling parallelization of the search algorithm. The Stockfish engine is open source, so the algorithmic improvements are public.

I can't speak for the algorithm used for Deep Blue, but I'm going to try and explain the improvements in chess programming. Deep Blue used multi-processor dedicated computers, so a comparison isn't really possible.
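To get a feel for how large the quoted match scores (94/100 and 92/100) are, they can be converted into an approximate rating gap. The sketch below assumes the standard logistic Elo model, in which an expected score p corresponds to a difference of 400 · log10(p / (1 − p)) points. The thread is not quoted as using this formula, and `elo_gap` is a name made up for this example. With only 100 games per match, the result is a rough estimate at best.

```python
import math


def elo_gap(points, games):
    """Approximate Elo difference implied by a match score (logistic model assumed)."""
    p = points / games
    if not 0 < p < 1:
        raise ValueError("score must be strictly between 0% and 100%")
    return 400 * math.log10(p / (1 - p))


if __name__ == "__main__":
    print(round(elo_gap(94, 100)))  # Stockfish (2017) vs RobboLito (2009): about 478
    print(round(elo_gap(92, 100)))  # RobboLito (2009) vs Shredder (2002): about 424
```

Under that assumption, each generation of engine is several hundred rating points ahead of the previous one on identical single-core hardware.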
